Abstract

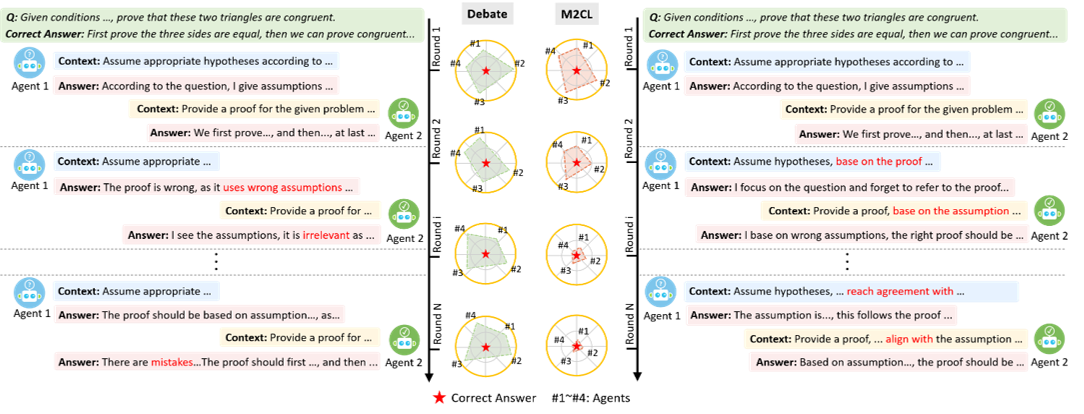

Multi-Agent Discussion (MAD), in which multiple LLM instances collaboratively solve problems via structured discussion, has recently attracted growing attention. However, we find that current MAD methods easily suffer from discussion inconsistency (LLMs fail to reach a coherent solution) due to the misalignment between their individual contexts. In this paper, we introduce a multi-LLM context learning method (M2CL) that learns a context generator for each agent, capable of dynamically generating context instructions per discussion round via automatic information organization and refinement. Specifically, inspired by our theoretical insights on the context instruction, M2CL trains the generators to control context coherence and output discrepancies via a carefully crafted self-adaptive mechanism. It enables LLMs to avoid premature convergence on “majority noise” and progressively reach the correct consensus. We evaluate M2CL on challenging tasks, including academic reasoning, embodied tasks, and mobile control. The results show that M2CL significantly surpasses existing methods by 20% to 50%, while enjoying favorable transferability and computational efficiency. Code is available at https://github.com/HansenHua/M2CL-ICLR26.

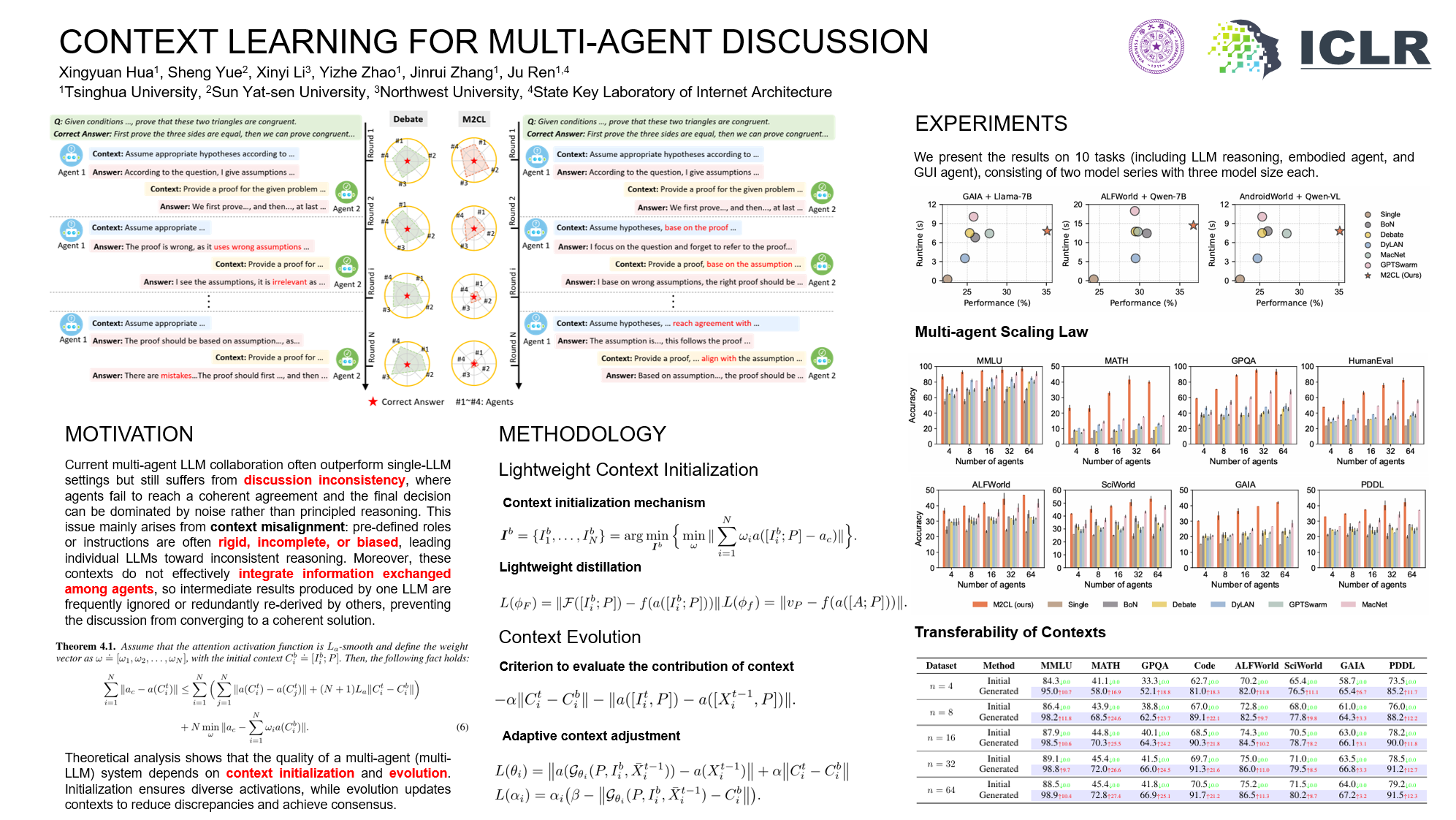

Motivation

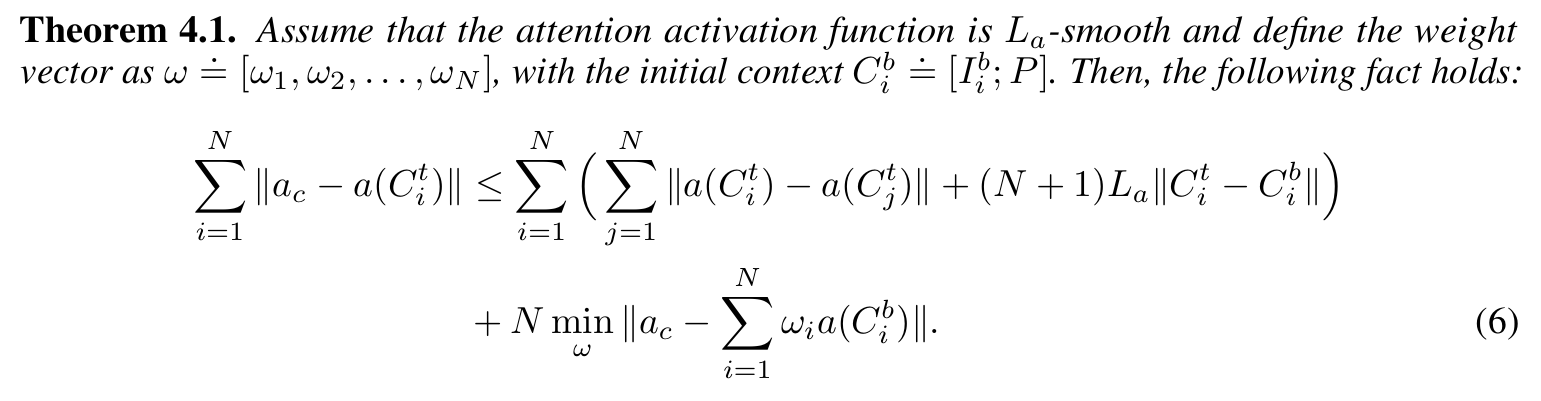

Theoretical analysis shows that the quality of a multi-agent (multi-LLM) system depends on context initialization and evolution. Initialization ensures diverse activations, while evolution updates contexts to reduce discrepancies and achieve consensus.
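To make the two phases concrete, the following is a minimal sketch of a discussion loop in which each agent receives a freshly generated context every round. It is not the M2CL implementation: the names `discuss`, `make_toy_agent`, and `toy_generator` are hypothetical, the LLMs are replaced by toy functions, and the rule-based generator stands in for the learned context generators. The toy agent's majority rule exists only to make the loop runnable and is not the paper's consensus mechanism.

```python
from typing import Callable, List

# Hypothetical interfaces (assumptions for illustration, not the paper's API):
# an agent maps (context, question, peer answers) to an answer,
# a context generator maps (question, round index, peer answers) to a context.
Agent = Callable[[str, str, List[str]], str]
ContextGenerator = Callable[[str, int, List[str]], str]


def discuss(question: str, agents: List[Agent],
            generators: List[ContextGenerator], rounds: int = 3) -> List[str]:
    """Run a round-based discussion; each agent gets a new context per round."""
    answers = [""] * len(agents)
    for r in range(rounds):
        new_answers = []
        for i, (agent, gen) in enumerate(zip(agents, generators)):
            # Peers' answers from the previous round (empty in round 0).
            peers = [a for j, a in enumerate(answers) if j != i and a]
            context = gen(question, r, peers)  # context evolves every round
            new_answers.append(agent(context, question, peers))
        answers = new_answers
        if len(set(answers)) == 1:  # all agents agree: stop early
            break
    return answers


def make_toy_agent(initial_guess: str) -> Agent:
    """Toy stand-in for an LLM: keeps its guess unless asked to reconcile."""
    def agent(context: str, question: str, peers: List[str]) -> str:
        if peers and "reconcile" in context:
            pool = peers + [initial_guess]
            # Most frequent answer; sorting makes tie-breaking deterministic.
            return max(sorted(set(pool)), key=pool.count)
        return initial_guess
    return agent


def toy_generator(question: str, round_idx: int, peers: List[str]) -> str:
    """Rule-based stand-in for a learned context generator."""
    if round_idx == 0:
        return "explore: answer independently"  # initialization: diversity
    return "reconcile: compare with peers"      # evolution: reduce discrepancy


agents = [make_toy_agent(g) for g in ["4", "4", "5"]]
final_answers = discuss("What is 2 + 2?", agents, [toy_generator] * 3)
print(final_answers)
```

In this sketch the first round corresponds to initialization (agents answer independently, so their outputs differ), and later rounds correspond to evolution (the context changes to steer agents toward agreement).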

Methodology

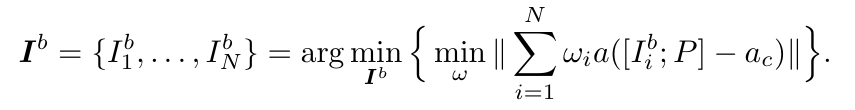

Context initialization

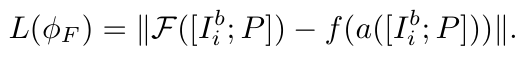

Lightweight distillation

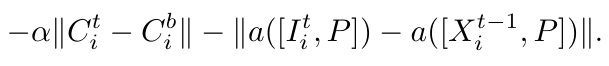

Criterion to evaluate the contribution of context

Adaptive context adjustment

Experiment

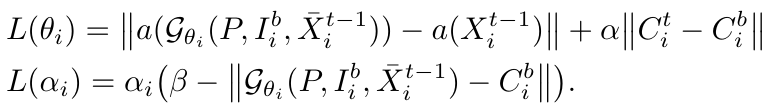

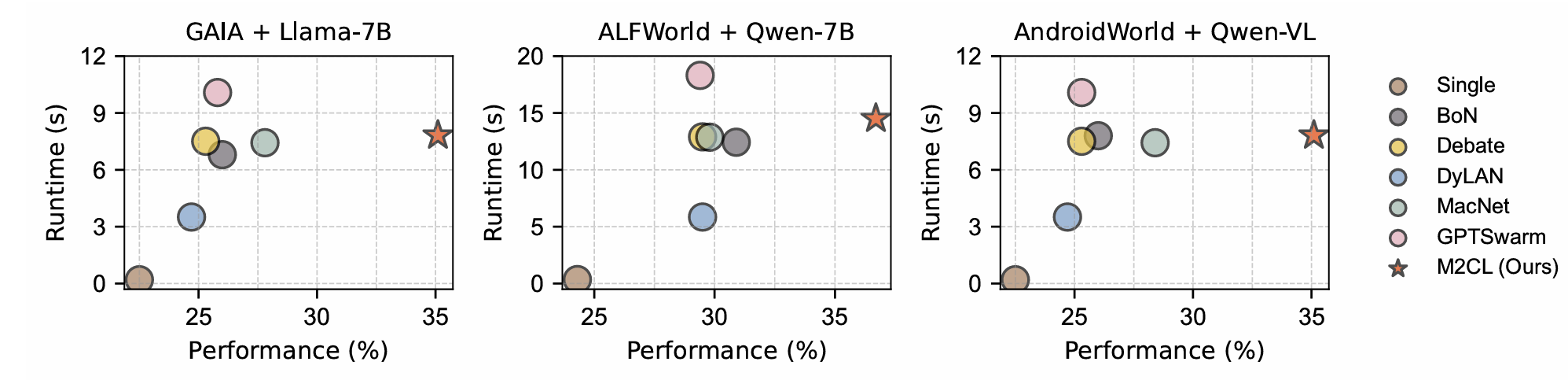

We evaluate M2CL on all datasets with varying base models and numbers of LLMs (ranging from 4 to 64). M2CL consistently outperforms the baselines on all 9 datasets, often by a significant margin. Of note, BoN outperforms most of the other baselines, especially in complex multi-round agentic tasks. This reveals the drawback of fixed contexts: although they expand the exploration space, they do not converge, and thus hinder LLMs from achieving true cooperative reasoning. In contrast, M2CL adapts contexts to keep responses relevant to the question while preserving creativity. This indicates that M2CL prevents LLMs with different contexts from unduly influencing one another and brings them into cooperation by reaching a consensus through discussion.

We also vary the number of LLMs from 4 to 64. M2CL exhibits a more efficient scaling law: its performance grows logarithmically with the number of LLMs before saturating, and it improves faster than the baselines. We speculate that this arises because the collaborative instructions in our generated contexts enable genuine inter-LLM cooperation, thereby unleashing the multidimensional reasoning capabilities of MAD.
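One way to check a logarithmic trend of this kind is to fit score ≈ a + b·ln(n) by least squares over the pre-saturation range. The sketch below assumes that functional form; the helper `fit_log_curve` is hypothetical, and the data points are synthetic values generated from a known curve purely to exercise the code. They are not results from the paper.

```python
import math
from typing import List, Tuple


def fit_log_curve(num_agents: List[int], scores: List[float]) -> Tuple[float, float]:
    """Least-squares fit of score = a + b * ln(n); returns (a, b)."""
    xs = [math.log(n) for n in num_agents]
    count = len(xs)
    mean_x = sum(xs) / count
    mean_y = sum(scores) / count
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, scores))
    b = sxy / sxx            # slope: gain per unit increase in ln(n)
    a = mean_y - b * mean_x  # intercept
    return a, b


# Synthetic data generated from a = 1.0, b = 2.0 (NOT measurements from the paper).
agent_counts = [4, 8, 16, 32, 64]
synthetic_scores = [1.0 + 2.0 * math.log(n) for n in agent_counts]

a, b = fit_log_curve(agent_counts, synthetic_scores)
print(f"a = {a:.3f}, b = {b:.3f}")
```

Under this form, a larger slope `b` means each doubling of the number of LLMs adds more performance (b·ln 2 per doubling), which is one way to compare how quickly different methods improve with scale.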

Poster

BibTeX

@article{hua2026context,
  title={Context Learning for Multi-Agent Discussion},
  author={Hua, Xingyuan and Yue, Sheng and Li, Xinyi and Zhao, Yizhe and Zhang, Jinrui and Ren, Ju},
  journal={arXiv preprint arXiv:2602.02350},
  year={2026}
}